“Almost any question can be answered cheaply, quickly and finally, by a test campaign. And that’s the way to answer them – not by arguments around a table.”

Claude Hopkins, “Scientific Advertising” (published in 1923)

Experimentation is a crucial part of innovation, and some would argue that there’s no innovation without experimentation. If the two are so closely linked, then before we can talk about experimentation, we need to understand what innovation is. The big misconception is that innovation is about new ideas: as long as we have ideas, everything else will magically get solved. We associate innovation with colourful post-its and countless brainstorming sessions. Searching for novel ideas is part of the innovation process, but it is not the most challenging part. Understanding innovation as “the best idea” is a myth: it is not only too narrow and simplistic, but also harmful.

The Business Dictionary defines innovation as: “The process of translating an idea or invention into a good or service that creates value.” The focus is on the process and the value creation rather than on ideas or inventions, and this is where experimentation comes into the picture. It is very rare for a lone inventor’s light bulb moment (another myth of innovation) to lead to a successfully implemented solution. Turning an idea or concept into reality, into something meaningful, involves thousands of variables that you can’t figure out alone or “by arguments around a table.” Experimentation is fundamental to gaining insights and new knowledge. So, in relation to innovation, experimentation can be seen as a “search for new value” (Zevae M. Zaheer), a journey to innovation.

There are countless approaches to experimentation, covering everything from exploring and identifying opportunities to gathering feedback, testing and evaluating ideas and solutions, and translating ideas into solutions. Have you heard of Randomized Controlled Trials (RCT)? How about prototyping? Minimum Viable Product (MVP)? Design Thinking? Human-Centered Design? No wonder there’s confusion about what we actually mean when we talk about innovation or experimentation. In 2017, we made a strategic move to incorporate the word experimentation into how we describe our innovation process. This decision was made to begin stripping down the innovation jargon used in our communications. Experimentation is a bit like innovation: a word that can mean different things to different people and that, in the worst case, is just an empty word without meaningful intent. However, experimenting itself doesn’t need to be complicated. In its purest form, it is about trying things out on a small scale. We don’t need to know the extensive experimentation vocabulary to test our ideas. We can spend ages brainstorming good (or bad) ideas, but without testing them, they are just concepts without any evidence that they would work. So, the question to ask is not “what’s your idea?” but “how have you tried to test it?”

Why do we need experimentation?

There are three (+1) main reasons why experimentation is essential.

1. We learn.

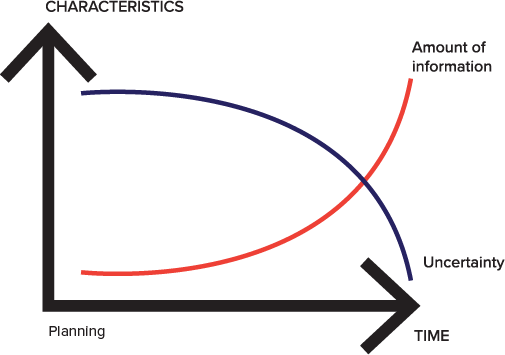

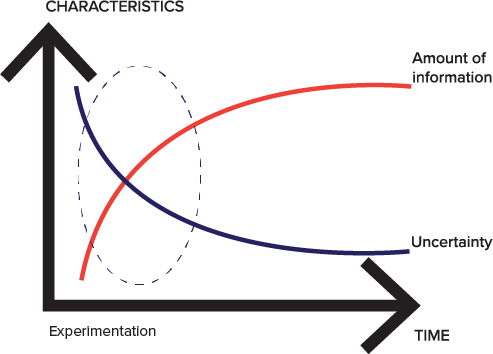

Experimentation is all about learning. It is about answering your questions and testing your assumptions. It is about gathering data. Experimentation helps us make more informed decisions about our ideas and projects. A common mistake is to take an idea and run with it without testing the assumptions behind the concept. We think we know, but quite often we don’t; we just assume. Still, we make decisions based on how we think things are. Many things may feel obvious, but it is always good to test them. We shouldn’t be afraid of experiments, as they help us gather the necessary information and thus become more certain. Experimentation helps us navigate the unavoidable uncertainty that is part of any innovation process.

2. We fail (in a positive way).

Failing is part of innovation, but we should pay attention to how we fail and how big the failure is. Failures can cost time and money; big failures can cost a lot of time and money. So, “the key is to fail quickly and cheaply, spend a little to learn a lot” (Vijay Govindarajan, Professor, Tuck School of Business). Experiments can help you do that. By trying things out on a small scale and as early as possible, you experience temporary mini-failures that provide you with a lot of information and thus help you avoid the significant failures that could bring (untested) projects down disastrously.

3. We save money.

Running experiments doesn’t need to be expensive, as there are several inexpensive ways to test your assumptions on a small scale. Another way to think about the costs of experiments is to consider how much not experimenting costs. Too often projects are rolled out without much trialling. Without testing ideas, products or services, we might end up with large (untested) projects that fail to deliver. What are the costs of failed projects? It would be wrong to say that experimenting has no costs; it does. Even small-scale experiments take resources away from something else. Equally, however, it would be wrong to think that doing nothing, or waiting, is risk-free. It simply is not. Ultimately, doing nothing is also a decision that can cost us money. So yes, there’s a risk in doing, but there’s also a risk in not doing.

4. We have better products and services.

Our chances of delivering excellent services are better if we really understand what kind of services people need and want. Instead of guessing what’s working and what’s not, we can make adjustments based on real feedback, or even kill off an idea that we thought was a good one but that our users, refugees, didn’t agree with. User involvement leads to better services.

Planning vs experimenting

A traditional approach to designing interventions is to plan, prepare and execute. This is a great methodology when we are executing something we are familiar with in an environment we are familiar with. That doesn’t mean there are no risks involved, but in such situations we can assume that if we study enough, we will know. There’s a fundamental difference between risk and uncertainty, as explained by Marco Steinberg: “risk is probability, uncertainty is lack of probability”. Known solutions or environments have risks that can be calculated and managed, but if we are really to do something new, we don’t know the risks. We don’t even know what we don’t know.

In addition, the environment we operate in is often increasingly complex and uncertain, the issues we are trying to tackle are too complicated for a linear process, and there are no ready-made solutions available. The traditional plan-prepare-execute approach is not sufficient; we need other tools to deal with complexity and uncertainty. Experiments bring tangible evidence early in the process, when it is still possible to change direction without big costs. Therefore, the earlier we start experimenting and collecting information, the quicker we can reduce the level of uncertainty (see “experimenting” image).

Graphs inspired by “The Service Innovation Handbook” by Lucy Kimbell.

How do you experiment?

Every experiment’s conditions and context are different, so there is no one-size-fits-all guidance for experimentation. There are tools, techniques and protocols available, but it can still be challenging to get into the nitty-gritty of experimentation and get started. However, we have identified a few main steps that can help guide us through experiments.

The basic idea is simple: do the least amount of work to get the most information. Here are six steps to get you started:

- 1) Define your purpose. Any experiment needs to have a clear purpose. Ask yourself, why are you running this experiment? A good experiment will tell you something, even if it’s something negative. If you already know the outcome, it is not an experiment, and if you are not going to introduce any changes anyway, there’s no reason to run one.

- 2) List your assumptions. Be clear on the difference between what you know and what you assume. Ask yourself, what do I know about my idea or solution? How do I know? What do I assume? Then, start listing the assumptions and questions you have about your idea or solution. What kind of assumptions do you have? What are the things you are unsure about or don’t know? List them all.

- 3) Identify the most critical assumptions. We have lots of assumptions about any idea or solution, but it would be difficult to test them all at once. Focus on testing just the critical ones. For example, you can score each assumption on a scale and then prioritise the ones that you need to get right because, if they are wrong, the result will be a failure. Another useful tool for prioritising assumptions is a matrix. Prioritise the assumptions that keep you awake at night!

- 4) Design and run your experiment. Whatever you do, keep it simple. Design your experiment so that you can start tomorrow and put a (not too long!) timeframe on it. The idea is to collect as much information with as little effort as possible. Forget surveys and market research; run your experiment with real people in an actual setting. People are funny creatures – they may say one thing and then do another, so the best way to test your assumptions is not necessarily to ask but to try things out and see what works.

- 5) Collect data. Record everything: the data you collect will guide your next steps.

- 6) Review results and decide on next steps. Assess the impact of the experiment against its goals. What did you learn? What do you need to change? Change your idea/solution based on what you learned. Do you need to repeat your experiment? Do you need a new experiment? Will you move forward with the solution or do you need more data? Decide how you are going to move on.
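Steps 2 and 3 above can be sketched in a few lines of Python. This is only an illustrative sketch, not a prescribed method: the 1 to 5 scales, the `impact` and `uncertainty` dimensions, the threshold, the quadrant labels and the example assumptions are all hypothetical choices made for this example. The article itself only says to score assumptions on a scale or place them in a matrix.

```python
from dataclasses import dataclass


@dataclass
class Assumption:
    text: str
    impact: int       # hypothetical scale: 1 (minor) to 5 (idea fails if this is wrong)
    uncertainty: int  # hypothetical scale: 1 (we have evidence) to 5 (pure guess)


def quadrant(a, threshold=3):
    """Place an assumption in a simple 2x2 impact/uncertainty matrix."""
    high_impact = a.impact >= threshold
    high_uncertainty = a.uncertainty >= threshold
    if high_impact and high_uncertainty:
        return "test first"
    if high_impact:
        return "monitor"
    if high_uncertainty:
        return "test later"
    return "park"


def prioritise(assumptions):
    """Sort so that high-impact, high-uncertainty assumptions come first."""
    return sorted(assumptions, key=lambda a: a.impact * a.uncertainty, reverse=True)


# Made-up assumptions, for illustration only.
assumptions = [
    Assumption("People will find the service point", impact=5, uncertainty=4),
    Assumption("Staff have time to run the pilot", impact=4, uncertainty=2),
    Assumption("Users prefer text messages to calls", impact=2, uncertainty=5),
    Assumption("Printing costs stay the same", impact=1, uncertainty=1),
]

ranked = prioritise(assumptions)
for a in ranked:
    print(f"{a.impact * a.uncertainty:>2}  {quadrant(a):<10}  {a.text}")
```

The assumptions that land in the "test first" quadrant are the ones that would keep you awake at night: they matter a lot and you have little evidence for them, so they are natural candidates for your first small experiment.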

Sounds easy? Well, yes and no. Designing and running small-scale experiments and tests doesn’t need to be complicated. In the simplest form, it is just about “trying things out”. What makes it hard is that uncertainty, like the possibility of failure, is an inevitable part of the process. Outcomes are not predictable, and we don’t want to fail; no one does. We don’t know what is waiting for us, and still, with experiments, we are asked to jump in and try something whose outcome we don’t know. We must be able to say, “I don’t know” even if it makes us uncomfortable. We need to be curious enough to constantly question and challenge our own assumptions, be open to risk and failure, and trust the process. All this is a lot to ask, and what makes it even more difficult is that it goes beyond an individual innovator’s ability to experiment. It requires an enabling environment for experimentation that supports learning from mistakes and some level of risk-taking.

Most organisations embrace the idea of innovation, but either don’t understand or don’t want to understand that you can’t have innovation without the pain of experiments (including the failed ones). The biggest responsibility lies with leaders and managers: to create a space where people feel comfortable experimenting and safe taking risks, and to remove speed bumps from the experimenters’ way. Creating a testing mentality across the organisation is a big shift in attitudes. Moving away from “only fully perfected is allowed” to a mindset of “I don’t know, but I will find out (that’s why I run an experiment)” won’t be easy. But it is the only choice we have if we wish to innovate.

Much of the inspiration and thoughts in this article came from Nesta’s previous publications. To learn more about experimentation check out:

- Better Public Services through Experimental Government

- Towards an experimental culture in government: reflections on and from practice

This essay was originally posted in the recently released report: UNHCR Innovation Service: Year in Review 2017. This report highlights and showcases some of the innovative approaches the organization is taking to address complex refugee challenges and discover new opportunities. You can view the full Year in Review microsite here.